Results

The Unified Deep Learning Benchmark for Satellite Image Restoration and Generation project develops a standardized, reproducible framework for evaluating modern deep learning models used in satellite image processing. Current research often pairs each model with its own datasets, preprocessing methods, and evaluation metrics, which makes it difficult to compare results fairly across models and tasks.

To address this limitation, the proposed system provides a unified evaluation pipeline that supports multiple satellite image processing tasks, including Cloud Removal (CR), Super-Resolution (SR), and High-Definition (HD) image generation. The framework integrates widely used remote sensing datasets such as AllClear, SEN12MS-CR-TS, WorldStrat, PROBA-V SR, and SAT25K, while applying consistent normalization, spectral alignment, and metric evaluation procedures.

The goal of this project is to ensure fair comparison, reproducibility, and transparency in satellite image restoration and generation experiments by allowing different models to be tested under the same conditions. By combining dataset handling, model evaluation, metric computation, and visualization into a single system, the project provides a reliable tool for researchers working in remote sensing and deep learning.

This unified benchmarking approach helps improve the consistency of experimental results and supports the development of more accurate and reliable satellite image processing methods.

The Unified Deep Learning Benchmark for Satellite Image Restoration and Generation is designed as a modular and extensible benchmarking framework that enables consistent evaluation of deep learning models across multiple satellite image processing tasks. The system integrates dataset handling, preprocessing, model execution, metric computation, visualization, and experiment logging into a single unified pipeline.

The framework supports multiple public satellite datasets and processes all inputs with the same normalization rules, spectral band alignment, and validation procedures. Different models can therefore be evaluated under identical conditions, which removes inconsistencies caused by dataset-specific preprocessing or incompatible evaluation protocols.
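As an illustration of what shared normalization and spectral band alignment could look like, here is a minimal NumPy sketch. It is not the framework's actual code: the function names, the target band order in `TARGET_BANDS`, and the reflectance scale of 10000 are all assumptions chosen for the example.

```python
import numpy as np

# Hypothetical canonical band order (assumption; the project text does not specify one).
TARGET_BANDS = ("B02", "B03", "B04", "B08")


def align_bands(image, source_bands, target_bands=TARGET_BANDS):
    """Reorder the channels of a (C, H, W) array so they follow target_bands."""
    missing = [b for b in target_bands if b not in source_bands]
    if missing:
        raise ValueError(f"missing bands: {missing}")
    index = [source_bands.index(b) for b in target_bands]
    return image[index]


def normalize(image, low=0.0, high=10000.0):
    """Rescale values from [low, high] to [0, 1] as float32, clipping outliers.

    The default range is an assumed reflectance scale, not a documented setting.
    """
    scaled = (image.astype(np.float32) - low) / (high - low)
    return np.clip(scaled, 0.0, 1.0)


def preprocess(image, source_bands):
    """Apply the same alignment and normalization to every dataset's input."""
    return normalize(align_bands(image, source_bands))
```

Because every dataset loader would call the same `preprocess` function, two models evaluated on the same scene receive identical tensors regardless of how the source files order their bands.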

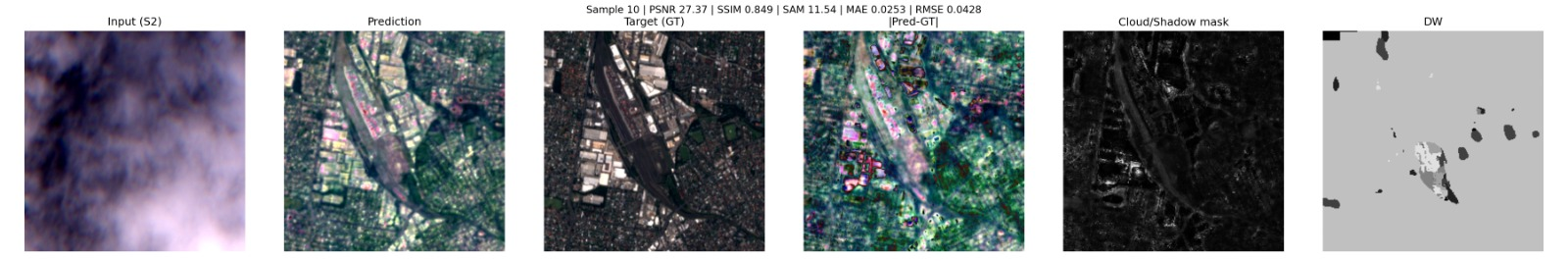

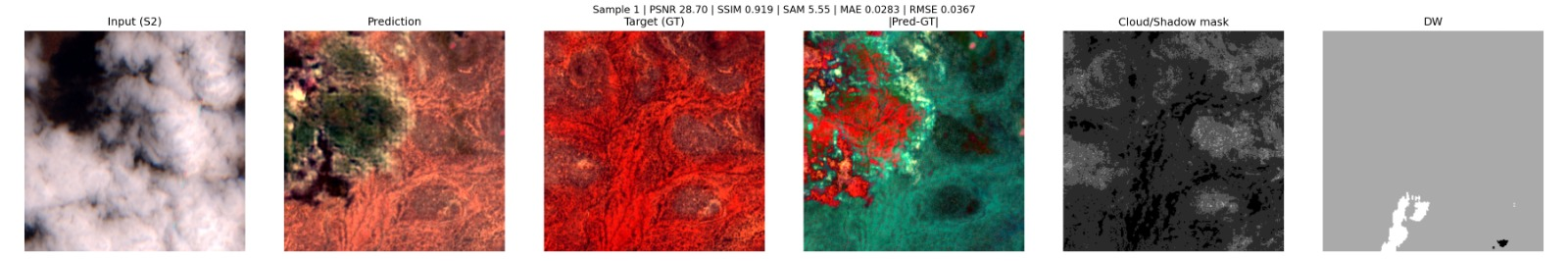

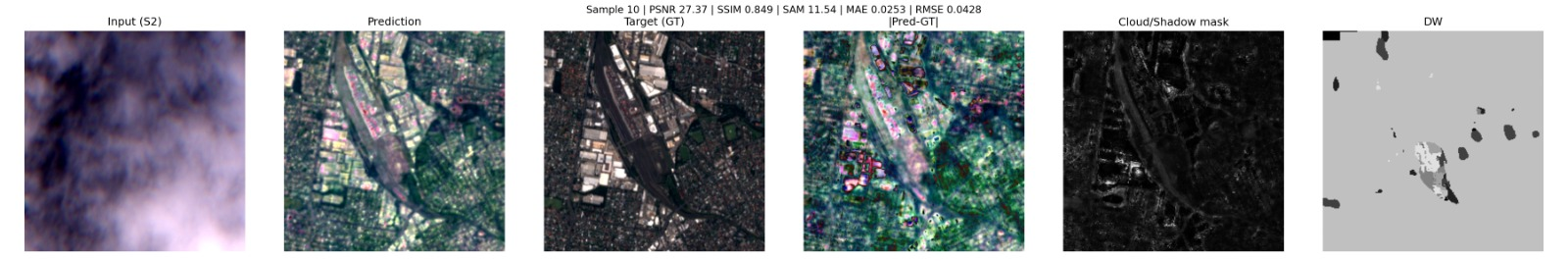

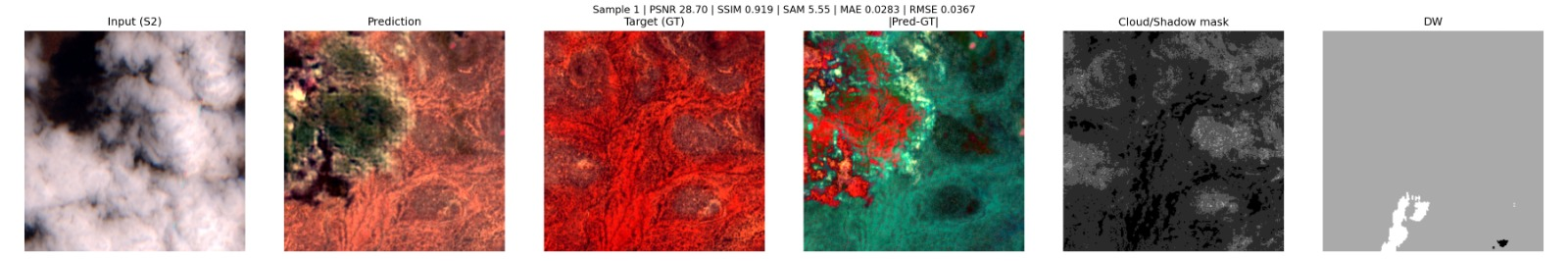

The benchmarking pipeline allows execution of baseline and custom deep learning models for cloud removal, super-resolution, and high-definition image generation, while automatically computing standardized evaluation metrics such as PSNR, SSIM, SAM, ERGAS, and other quality measures. All results are saved in structured formats, enabling reproducible experiments and reliable comparison between models, datasets, and preprocessing settings.
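For reference, three of the metrics named above can be written in a few lines of NumPy. The sketch below follows the standard textbook definitions of PSNR, SAM, and ERGAS and is not taken from the project's source code. The function signatures, the `(C, H, W)` array layout, and the default `ratio=1.0` for ERGAS are assumptions. SSIM is omitted because it needs windowed statistics; scikit-image's `skimage.metrics.structural_similarity` is one commonly used implementation.

```python
import numpy as np


def psnr(reference, estimate, data_range=1.0):
    """Peak signal-to-noise ratio in dB, computed over the whole array."""
    diff = reference.astype(np.float64) - estimate.astype(np.float64)
    mse = np.mean(diff ** 2)
    if mse == 0:
        return float("inf")
    return float(10.0 * np.log10(data_range ** 2 / mse))


def sam(reference, estimate, eps=1e-12):
    """Mean spectral angle in degrees between per-pixel spectra of (C, H, W) arrays."""
    ref = reference.reshape(reference.shape[0], -1).astype(np.float64)
    est = estimate.reshape(estimate.shape[0], -1).astype(np.float64)
    dot = np.sum(ref * est, axis=0)
    norms = np.linalg.norm(ref, axis=0) * np.linalg.norm(est, axis=0)
    cos = np.clip(dot / np.maximum(norms, eps), -1.0, 1.0)
    return float(np.degrees(np.mean(np.arccos(cos))))


def ergas(reference, estimate, ratio=1.0, eps=1e-8):
    """ERGAS = 100 * ratio * sqrt(mean over bands of (RMSE_b / mean_b)^2).

    ratio is the high-resolution to low-resolution pixel size ratio;
    1.0 is an assumed default for tasks with no resolution change.
    """
    ref = reference.reshape(reference.shape[0], -1).astype(np.float64)
    est = estimate.reshape(estimate.shape[0], -1).astype(np.float64)
    mse_per_band = np.mean((ref - est) ** 2, axis=1)
    mean_per_band = np.mean(ref, axis=1)
    return float(100.0 * ratio * np.sqrt(np.mean(mse_per_band / (mean_per_band ** 2 + eps))))
```

Higher PSNR is better, while lower SAM and ERGAS are better. SAM ignores per-pixel brightness scaling, so it complements PSNR when spectral fidelity matters.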

In addition to quantitative evaluation, the system provides visual comparison tools that display input images, model outputs, and reference images side-by-side, along with difference maps and zoomed regions for detailed analysis. Experiment configurations, dataset versions, and evaluation parameters are recorded automatically to ensure transparency and repeatability of results.
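One way to record configurations and dataset versions automatically is to store each run as a JSON record that includes a hash of its configuration. The following is a minimal sketch using only the Python standard library. The record fields and function names are hypothetical and do not describe the project's actual log format.

```python
import hashlib
import json
import platform
from datetime import datetime, timezone


def config_fingerprint(config):
    """Return a SHA-256 hash of a JSON-serializable config, independent of key order."""
    canonical = json.dumps(config, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def build_record(config, metrics):
    """Bundle the config, its fingerprint, the metrics, and basic run metadata."""
    return {
        "timestamp_utc": datetime.now(timezone.utc).isoformat(),
        "python_version": platform.python_version(),
        "config": config,
        "config_sha256": config_fingerprint(config),
        "metrics": metrics,
    }


def save_record(record, path):
    """Write the record as indented JSON with sorted keys for stable diffs."""
    with open(path, "w", encoding="utf-8") as f:
        json.dump(record, f, indent=2, sort_keys=True)
```

Two runs with the same fingerprint used the same settings, so their metrics can be compared directly. A differing fingerprint signals that a dataset version or an evaluation parameter changed between runs.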

By combining these components into a single unified environment, the project provides a practical and research-oriented benchmarking solution that simplifies satellite image evaluation workflows and supports more consistent and scientifically reliable experimentation.

This section contains the main materials related to the project, including the final report, source code, datasets, system diagrams, and external tools used during development. All resources are provided to support transparency, reproducibility, and clear understanding of the benchmarking framework.

Source code of the unified benchmarking framework, including dataset loaders, evaluation pipeline, and visualization tools.

Our framework integrates the following public satellite datasets for comprehensive evaluation across different tasks and image types.

AllClear: Cloud removal dataset with paired cloudy and cloud-free satellite images for evaluating cloud removal algorithms.

SEN12MS-CR-TS: Sentinel-2 multi-spectral cloud removal time series dataset for temporal consistency evaluation.

WorldStrat: Global stratified satellite imagery dataset for diverse geographic and seasonal representation.

PROBA-V SR: Super-resolution dataset from the PROBA-V satellite for evaluating high-resolution image generation.

SAT25K: Large-scale satellite image dataset with diverse resolutions and processing tasks.